MMS, revisited

By Kevin Collins

We recently had the privilege of attending the International Field Directors and Technologies Conference in Providence, Rhode Island, where we presented an update on our ongoing research into the use of MMS in text surveys. Longtime readers of this newsletter know we have covered this topic before, and that we generally recommend against using brand images in text messages.

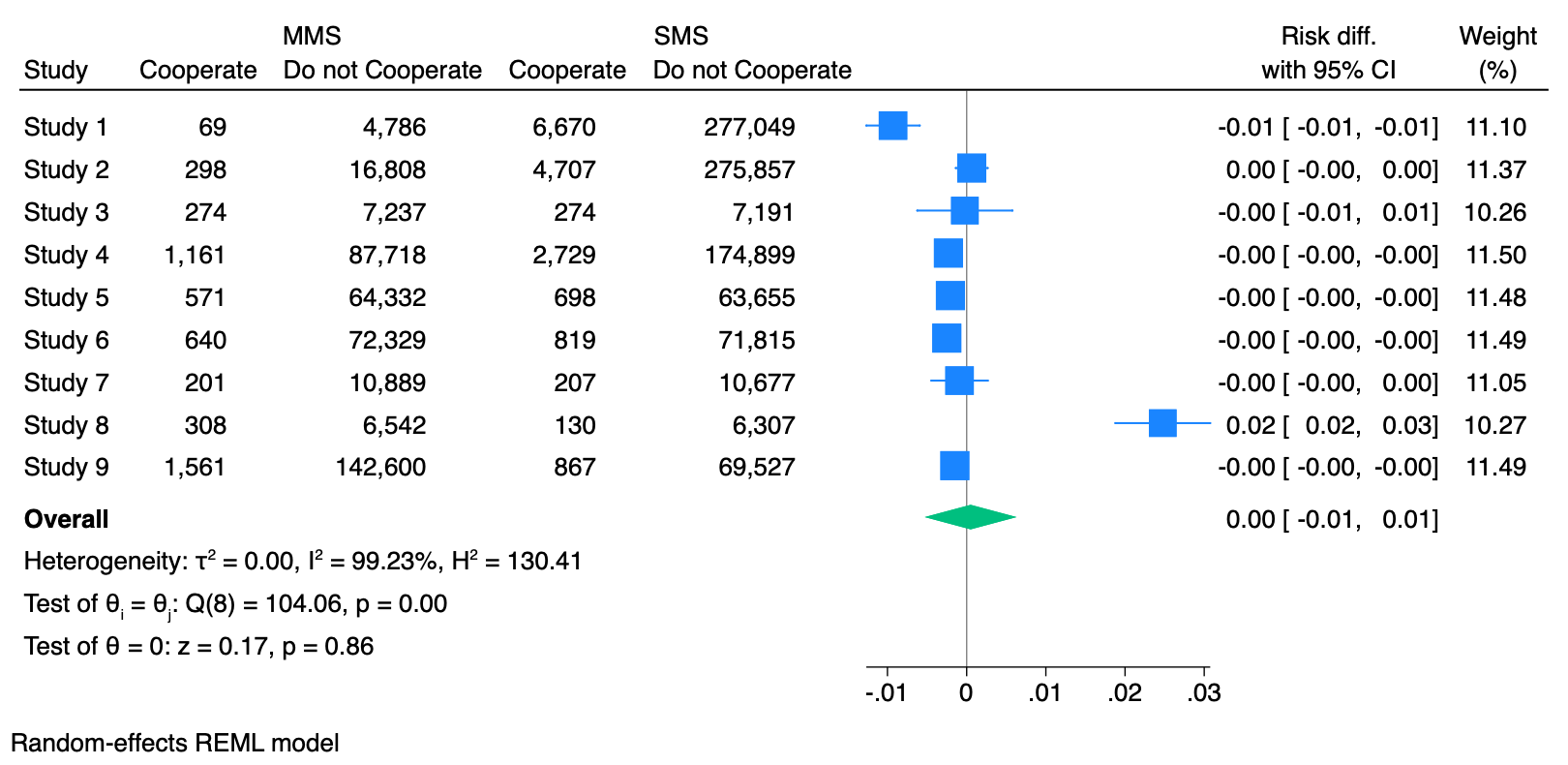

The steady drumbeat of vendors pitching MMS as a value-add — and charging more for the privilege — makes the question worth revisiting with a larger evidence base. We now have a random-effects meta-analysis spanning nine experiments across 1.1 million message recipients. With that data, the picture is clearer than it was a year ago: in almost every condition, MMS underperforms SMS, with one particularly striking exception.
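For readers less familiar with the method, a random-effects meta-analysis pools per-experiment estimates while allowing the true effect to differ from one experiment to the next. Below is a minimal Python sketch of one common estimator, DerSimonian-Laird. It illustrates the idea and is not necessarily the estimator behind the results in this post. The `Experiment` type and `dersimonian_laird` function are hypothetical names, and each input is assumed to be an MMS-minus-SMS effect estimate (for example, a difference in cooperation rates) together with its sampling variance.

```python
import math
from dataclasses import dataclass


@dataclass
class Experiment:
    effect: float    # assumed: MMS-minus-SMS effect estimate for one experiment
    variance: float  # sampling variance of that estimate


def dersimonian_laird(experiments: list[Experiment]) -> tuple[float, float, float]:
    """Pool effects with the DerSimonian-Laird random-effects model.

    Returns (pooled_effect, standard_error, tau_squared).
    """
    k = len(experiments)
    if k < 2:
        raise ValueError("need at least two experiments")

    # Fixed-effect (inverse-variance) weights and pooled estimate.
    w = [1.0 / e.variance for e in experiments]
    sum_w = sum(w)
    fixed = sum(wi * e.effect for wi, e in zip(w, experiments)) / sum_w

    # Cochran's Q: weighted spread of the estimates around the fixed-effect mean.
    q = sum(wi * (e.effect - fixed) ** 2 for wi, e in zip(w, experiments))

    # Method-of-moments estimate of between-experiment variance, floored at 0.
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - (k - 1)) / c)

    # Random-effects weights add tau^2 to each within-experiment variance.
    w_re = [1.0 / (e.variance + tau2) for e in experiments]
    pooled = sum(wi * e.effect for wi, e in zip(w_re, experiments)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    return pooled, se, tau2
```

The between-experiment variance `tau2` is what makes this a random-effects model: when experiments disagree more than sampling noise would explain, `tau2` grows, the weights become more equal across experiments, and the pooled standard error widens.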

A quick refresher on the technology. SMS, the standard short message format, carries up to 160 characters per segment using the GSM-7 character set, or 70 if the message contains any character outside that set (such as an emoji), which forces UCS-2 encoding. Segments can be concatenated into messages of up to 1,600 characters that appear to the recipient as a single message, although each segment of a concatenated message holds slightly less (153 GSM-7 or 67 UCS-2 characters) because part of it is reserved for the header that reassembles the pieces.

MMS, by contrast, can carry images, GIFs, or short audio or video clips alongside text, and it can carry up to 1,600 characters of text in one message without segmenting. But the file-size budget is tight: 500 KB on the most restrictive carrier (US Cellular). Many aggregators, the services that sit between platforms like ours and the carriers, enforce that lowest carrier limit across the board, which means that in practice you are working with a few hundred kilobytes for the entire message. You are not sending a movie; you are sending a small image plus some text.
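To make the SMS segment arithmetic concrete, here is a minimal Python sketch that estimates how many segments a message body occupies. It assumes the standard GSM 03.38 character tables and the usual 153- and 67-character payloads per concatenated segment; carriers and aggregators may count slightly differently, and `sms_segments` is a hypothetical helper, not any vendor's API. It also ignores the rule that an escape pair or an emoji is never split across a segment boundary, so real counts can occasionally be one higher.

```python
import math

# GSM 03.38 basic character set (the ESC code point is omitted).
GSM7_BASIC = set(
    "@£$¥èéùìòÇ\nØø\rÅåΔ_ΦΓΛΩΠΨΣΘΞÆæßÉ !\"#¤%&'()*+,-./0123456789:;<=>?"
    "¡ABCDEFGHIJKLMNOPQRSTUVWXYZÄÖÑÜ§¿abcdefghijklmnopqrstuvwxyzäöñüà"
)
# Extension-table characters cost two septets (escape + character).
GSM7_EXTENDED = set("^{}\\[~]|€\f")


def sms_segments(text: str) -> tuple[str, int]:
    """Return (encoding, segment_count) for an SMS body."""
    if all(ch in GSM7_BASIC or ch in GSM7_EXTENDED for ch in text):
        encoding = "GSM-7"
        units = sum(2 if ch in GSM7_EXTENDED else 1 for ch in text)
        single, multi = 160, 153  # concatenation header eats 7 septets per part
    else:
        encoding = "UCS-2"
        # Count UTF-16 code units: emoji outside the BMP take two each.
        units = len(text.encode("utf-16-le")) // 2
        single, multi = 70, 67
    if units <= single:
        return encoding, 1
    return encoding, math.ceil(units / multi)
```

For example, a 161-character plain-text invitation becomes two segments, and adding a single emoji to a 100-character message pushes it into UCS-2 and also into two segments.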

So why do people use MMS at all? In our reading of how MMS is pitched, four explanations recur. The first, deliverability, used to be a genuine consideration: there was a time when MMS could be sent as unregistered traffic, bypassing the carriers' 10DLC vetting system, but that loophole closed years ago. The second, the longer per-segment character limit, matters mainly for platforms designed around a single blast message. It matters less in our setup, where we use a multi-message protocol that first asks respondents to participate and only engages those who agree. The third (that images grab attention) and the fourth (that an image lends credibility to the sender) are testable, and they are what most of our experiments have been designed to interrogate.

Our basic experimental setup is simple. We randomize text-to-web survey invitations to receive an SMS-only message or an otherwise identical message that includes an image, and compare cooperation rates (the share of contacted respondents who agree to participate) and completion rates (the share who complete the survey). Across nine such experiments, we have tested branded logos from the surveying organization, professionally designed infographic-style virtual flyers, a government logo, and, in our most recent internal survey, an animated GIF and a meme.
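The randomize-and-compare design can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of our fielding system: `assign_arms` and `arm_rates` are hypothetical helpers, assignment is a shuffled round-robin, and both rates use everyone contacted in the arm as the denominator, which is our reading of the definitions above. The same code handles more than two arms.

```python
import random
from collections import Counter


def assign_arms(respondent_ids, arms, seed):
    """Randomly assign each respondent to one arm, in near-equal shares."""
    rng = random.Random(seed)
    ids = list(respondent_ids)
    rng.shuffle(ids)
    # Deal shuffled IDs round-robin so arm sizes differ by at most one.
    return {rid: arms[i % len(arms)] for i, rid in enumerate(ids)}


def arm_rates(assignment, agreed, completed):
    """Return {arm: (cooperation_rate, completion_rate)}.

    Assumed definitions: cooperation = agreed / contacted,
    completion = completed / contacted, both within each arm.
    """
    contacted = Counter(assignment.values())
    agreed_n = Counter(assignment[r] for r in agreed)
    completed_n = Counter(assignment[r] for r in completed)
    return {
        arm: (agreed_n[arm] / n, completed_n[arm] / n)
        for arm, n in contacted.items()
    }
```

Dealing shuffled IDs round-robin keeps arm sizes within one of each other; an independent coin flip per respondent would also be valid randomization but can leave the arms unbalanced in small samples.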

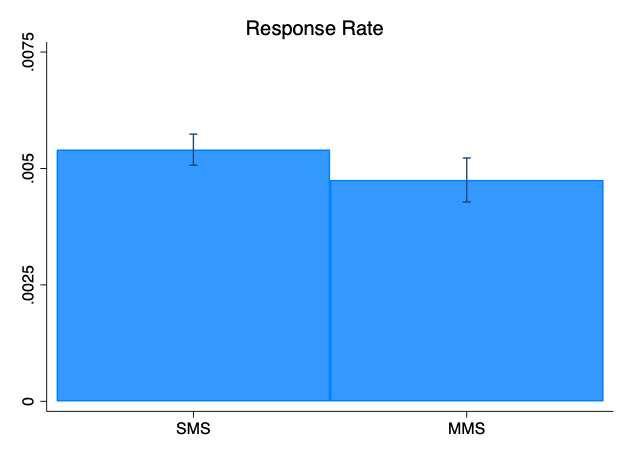

The brand-logo result is the easiest to summarize. In an internally funded survey we fielded in October 2024 in Arizona, North Carolina, and Pennsylvania, the MMS arm, identical to the SMS arm except that it carried the surveying organization's logo, produced a statistically significantly lower cooperation rate (p<0.001) and a meaningfully lower overall response rate (p<0.05). The effect was large enough that we ended the experiment after a few days to protect the survey. Similar tests with named, in-state university clients, whose brands ought to be familiar and welcome to local respondents, produced null or negative results.
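For readers who want to run the same kind of arm-versus-arm comparison, a standard pooled two-proportion z-test is a reasonable starting point. This is a minimal sketch, not necessarily the test behind the p-values above (an analysis with covariates or clustering would differ), and `two_proportion_ztest` is a hypothetical name. It takes counts of successes (for example, respondents who agreed) and counts contacted in each arm.

```python
import math
from statistics import NormalDist


def two_proportion_ztest(x1, n1, x2, n2):
    """Two-sided pooled z-test for a difference between two proportions.

    Returns (difference, z, p_value), where difference = x1/n1 - x2/n2.
    """
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)  # common rate under the null hypothesis
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        raise ValueError("both arms have rate 0 or both have rate 1")
    z = (p1 - p2) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p1 - p2, z, p_value
```

Passing the MMS arm first makes a negative difference mean MMS underperformed SMS.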

Infographics, the next-most-pitched approach, did not help either. In a national registered-voter sample fielded in late August 2025, we tested two professionally designed infographic-style virtual flyers.

The two designs performed indistinguishably from each other, so we present them as a single combined arm. Together they did worse than the text-only message in the text-to-web arm and made no difference in the live-interviewer “interactive” arm of the survey.

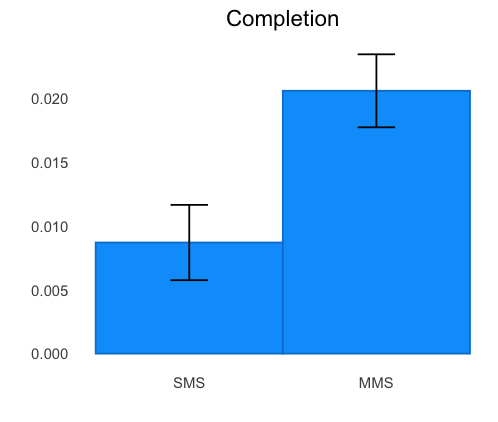

The exception, and the most interesting result in the set, came from a project we ran for a local county government, through an intermediary client. Here the "brand" was the county government's own logo, deployed in a survey of county residents. The MMS arm produced a statistically significant lift in cooperation rate, and — more strikingly — more than doubled the completion rate relative to SMS-only.

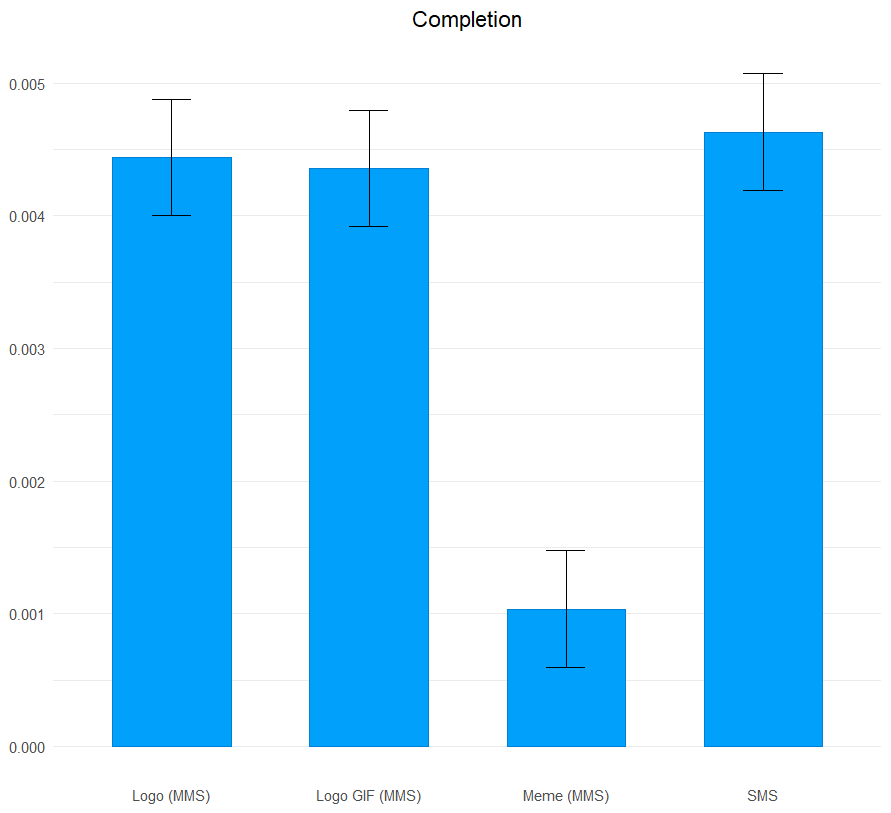

Earlier this month, on our April 2026 internal tracking poll, we ran one more experiment to test whether motion or humor changes the picture. We fielded four arms: SMS-only, a still Survey 160 logo, a simple spinning Survey 160 logo (an animated GIF), and a "computer kid" meme captioned with an explicit "take the survey" message.

The two logo arms, static and spinning, performed about equally and slightly worse than SMS, which is itself instructive. Motion did not buy us anything, and on many phones the recipient has to tap to play an animation, so a "spinning" logo often arrives looking exactly like the still one. The meme arm was the surprise of the test: it substantially reduced both cooperation and completion. Apparently, not everyone shares our survey-centric sense of humor.

A few caveats are worth noting. First, "MMS versus SMS" is always a comparison against some particular SMS strategy, and we have spent considerable effort optimizing ours. Researchers who find MMS outperforming SMS head-to-head should ask whether the result is really about MMS or about an unoptimized SMS condition. Second, our multi-message protocol (ask first, send the link second) means we are rarely under pressure to cram everything into a single message. That pressure is one of the stronger arguments for MMS on platforms designed around single blasts, so teams using those platforms may face a different cost calculus. Third, the space of possible images and creative treatments is wide, and there are image strategies we have not yet tried. We will continue to experiment.

Where does this leave us? The recommendation from last year's post still holds: avoid MMS by default, and if you are considering it for a particular project, test it against a best-practices SMS approach before paying for it. The one finding worth taking seriously in the other direction is the government-logo result, which we suspect is less about the image itself than about a trusted institutional sender, a hypothesis we plan to test further. If you are running surveys for a government and would like to add another experiment to our meta-analysis, or if you believe your particular logo would beat the average, please reach out at info@survey160.com and we can experiment together.
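Before paying for MMS in a head-to-head test, it helps to know roughly how many recipients each arm needs. Here is a minimal sketch of the standard normal-approximation sample-size formula for comparing two proportions with a two-sided test. The function name `n_per_arm` and its defaults (5% significance, 80% power) are assumptions for illustration, and the rates you plug in should come from your own SMS baseline and the smallest MMS effect you would care about.

```python
import math
from statistics import NormalDist


def n_per_arm(p_sms, p_mms, alpha=0.05, power=0.80):
    """Recipients needed per arm to detect p_sms vs p_mms (two-sided test)."""
    if p_sms == p_mms:
        raise ValueError("rates must differ")
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for the significance level
    z_beta = z.inv_cdf(power)           # quantile for the desired power
    p_bar = (p_sms + p_mms) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p_sms * (1 - p_sms) + p_mms * (1 - p_mms))
    ) ** 2
    return math.ceil(numerator / (p_sms - p_mms) ** 2)
```

Because the required sample grows with the inverse square of the difference, detecting a two-point change in cooperation rate (say, 10% to 12%) takes thousands of recipients per arm, while a five-point change (10% to 15%) takes several hundred.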